Basic information

The Multilingual Natural Language Processing course covers the fundamentals of AI for automatically processing, understanding, and generating human language. Taught in English, it moves from foundational concepts to modern Large Language Models. Students begin with words and tokens, tokenization (including BPE), word embeddings, and language modeling, then learn how to evaluate the outputs of NLP systems.

The course progresses to neural networks for NLP, attention, and the Transformer architecture, followed by Large Language Models, their training phases, scaling laws, PEFT, and hands-on applications (we will also cover the making of our Minerva LLM and its current evolution!). Advanced topics include machine translation, generative model evaluation, reinforcement learning for LLMs, Retrieval-Augmented Generation, advanced architectures (MoE, MAMBA), semantics, coreference resolution, and narrative understanding.

The course is part of the curricula of the Master’s degrees in AI and Robotics, Engineering in Computer Science and Artificial Intelligence, and Data Science.

Spring 2026

February 27 – May 29, 2026

- Wednesday (10:15 – 12:00)

- Friday (8:30 – 10:45)

Venue: S. Pietro in Vincoli, via delle Sette Sale, 29 (room/aula 41)

All the class material can be found in the dedicated classroom: https://classroom.google.com/c/ODQyOTcxMjM1NTQx

Course Syllabus

Foundations of NLP: words, tokens and language models

- Introduction to the course

- Words & tokens

- Tokenization techniques

- Language models

- Evaluation basics

Machine Learning for NLP

- Machine Learning & classification

- Logistic regression

- Cross-entropy (CE)

- Gradient descent

- Evaluation: Accuracy, Precision, Recall, F-measure

- Train/dev/test sets & statistical significance

Word Representations & Semantics

- Count-based semantics

- Cosine similarity

- Word2vec

- Embedding properties

- Visualization & bias

Neural Networks & Transformer Encoders

- Neural networks & deep learning

- The attention mechanism

- Transformer (1): The Encoder architecture

- From BERT to mmBERT

- Sentence embeddings

Decoders: Large Language Models

- Transformer (2): The Decoder architecture

- Training phases (pretraining, post-training)

- Scaling laws

- PEFT

- Reinforcement learning for LLMs

- Retrieval-Augmented Generation

Advanced Topics & Applications

- Machine Translation

- Evaluation of generative models

- BabelNet and Word Sense Disambiguation

- Semantic Role Labeling and Semantic Parsing

- Coreference Resolution

- Narrative Understanding

- Advanced architectures (MoE, MAMBA)

Exams and Assessment

- Mon 22/6/26, 13:00–18:00 – 108 Marco Polo

- Wed 15/7/26, 8:00–13:00 – 108 Marco Polo

- Tue 22/9/26, 8:00–13:00 – 105 Marco Polo

After submitting your homeworks or project (see the notes on attending vs. non-attending students below), you will take an oral exam consisting of:

- A presentation of your homeworks (10 minutes overall);

- Theory questions that start from your homeworks and may cover the whole course program.

Attending students are students who regularly attend the course. They have to complete two homeworks:

- One to be delivered during the course;

- One to be delivered 10 days before each exam session and, in any case, by the September session.

Non-attending students are students who submit both homeworks at any exam session (including January and February), as well as attending students who failed the homeworks. They must take the full exam (more details in the next slides).

Their homeworks must be delivered 10 days before the exam session. For instance, if the exam date is December 25th, the homeworks must be delivered by midnight++ on December 15th, where the ++ means we will not be strict about the submission hour.
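As a quick check of the 10-day rule against the exam dates listed above, here is a minimal Python sketch. It assumes that "10 days before" means ten calendar days before the exam date, as in the December example; the names `EXAM_DATES`, `LEAD_TIME`, and `homework_deadline` are illustrative only and not part of any course tooling. Always refer to the official announcements for the binding deadlines.

```python
from datetime import date, timedelta

# Exam dates copied from the "Exams and Assessment" list (2026 sessions).
EXAM_DATES = [date(2026, 6, 22), date(2026, 7, 15), date(2026, 9, 22)]

# Assumption: the deadline falls ten calendar days before the exam date.
LEAD_TIME = timedelta(days=10)


def homework_deadline(exam_date: date) -> date:
    """Return the homework delivery date for a given exam date."""
    return exam_date - LEAD_TIME


if __name__ == "__main__":
    for exam in EXAM_DATES:
        due = homework_deadline(exam)
        print(f"exam {exam.isoformat()} -> homework due by {due.isoformat()}")
```

Under this assumption, the three sessions correspond to deliveries by June 12, July 5, and September 12, 2026.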

Teaching Staff

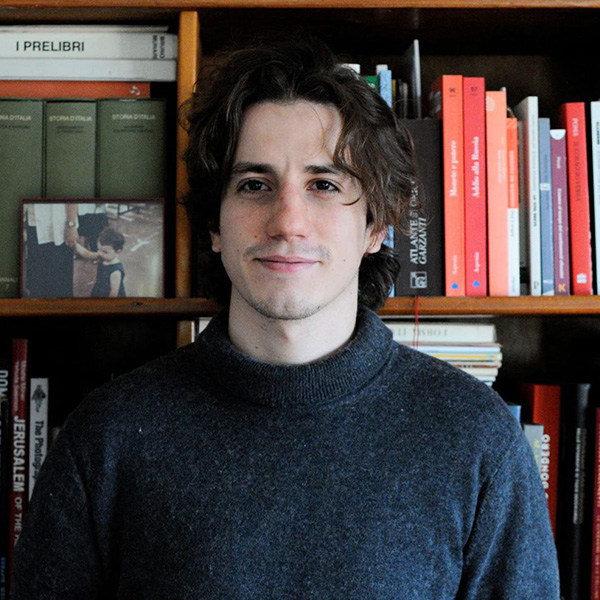

Prof. Roberto Navigli

Office: room B119, via Ariosto, 25

Email: surname chiocciola diag plus uniroma1 plus it (if you are a human being, please replace plus with . and chiocciola with @)

Website: https://www.diag.uniroma1.it/navigli/